UAE Launches Pioneering AI Security Lab to Certify Trusted Systems

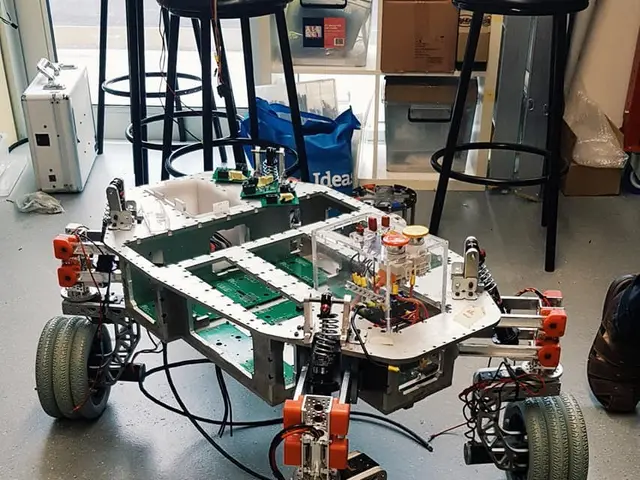

A new AI security lab has launched in the UAE to assess and certify artificial intelligence systems. The facility, already operational, will test tens of thousands of AI agents each year against strict security and compliance standards. It was created through a partnership between the UAE Cyber Security Council, Open Innovation AI, Cisco, and Emircom. The lab evaluates AI models across six critical areas: model security, threat defence, data integrity, supply-chain security, agent autonomy, and regulatory compliance. Systems that meet its requirements will receive a national certification mark, allowing UAE-based developers to bring trusted products to market.

Assessments follow international benchmarks, including ISO 42001, MITRE ATLAS, NIST AI RMF, and OWASP frameworks. The infrastructure combines Cisco’s secure networking, NVIDIA GPU-powered computing, and Open Innovation AI’s software platform. Dr. Mohamed Al-Kuwaiti, Head of Cyber Security for the UAE Government, described the lab as a sovereign capability for building secure and trustworthy AI. It will serve federal and local government bodies, critical infrastructure operators, and private sector organisations seeking certification.

The lab operates under the governance of the UAE Cyber Security Council. Its assessments are intended to help ensure AI systems meet both national and international security standards, and developers and organisations can use the facility to validate their models before deployment.
